May 15, 2018

Can Supercomputers Do More for Future Human Resilience Than the Abacus?

Posted by Annika Deurlington

Today’s post is written by David Trossman, Research Associate, University of Texas-Austin’s Institute for Computational Engineering and Sciences

Scientists like Joseph Fourier, John Tyndall, and Eunice Foote made discoveries that led Svante Arrhenius to calculate how doubling the amount of carbon dioxide in the atmosphere would affect global temperatures. This was one of the first qualitatively accurate models of the Earth system. And this was in the 1800s. The additional complexity that has since been accounted for in Earth system models has required extra computational power. Applying Newton’s Laws, the first law of thermodynamics, the ideal gas law, and theories to account for fine-scaled physics/chemistry/biology/geology all at once could no longer be done by a single human. Even rooms full of human calculators were not enough. As a result, scientists needed to develop the mathematical framework to predict the weather with electric brains (aka computers), which they achieved by the 1950s. Rooms full of souped-up computers, which we call supercomputers, have since been workhorses for scientists working on the Earth system. Now, supercomputers can perform more than a quadrillion floating point operations every second. Try to do that by hand. The human brain can, in principle, process information at about the same rate, but in practice, we only do maybe ten floating point operations per second because we can’t focus or remember stuff as well as supercomputers.
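To put those two rates side by side, here is a back-of-the-envelope sketch. It takes the round numbers from the paragraph above at face value (a quadrillion operations per second for the machine, ten per second for a person); the constant and function names are made up for illustration.

```python
# Round numbers taken from the paragraph above; treat them as assumptions.
SUPERCOMPUTER_FLOPS = 1e15   # "more than a quadrillion" operations per second
HUMAN_FLOPS = 10.0           # "maybe ten" operations per second

SECONDS_PER_YEAR = 365.25 * 24 * 3600


def human_years_to_match(machine_seconds: float) -> float:
    """Years a person would need to redo `machine_seconds` of supercomputer work."""
    operations = SUPERCOMPUTER_FLOPS * machine_seconds
    human_seconds = operations / HUMAN_FLOPS
    return human_seconds / SECONDS_PER_YEAR


if __name__ == "__main__":
    years = human_years_to_match(1.0)
    print(f"One supercomputer-second is about {years:,.0f} human-years of arithmetic")
```

Under these assumptions, one second of supercomputer time works out to roughly three million years of nonstop hand calculation.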

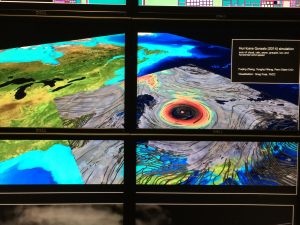

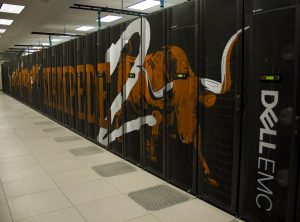

Stampede2, a supercomputer at the Texas Advanced Computing Center. Photo by Sean Cunningham

Supercomputers are now an important part of our science infrastructure because they help us predict risks and save lives. Routine forecasts help various sectors of our economy make decisions such as determining flood insurance rates, managing natural resources (such as crops/water/fish stocks), concealing underwater nuclear weapons, informing shipping and military routes, and predicting how weather events (hurricanes/heat waves/floods) endanger lives and infrastructure. Essentially, forecasts empower people to become more resilient by building new infrastructure or adapting existing infrastructure to withstand the changes to come.

Forecasts depend on Earth observations to be robust because we need to know the conditions of the present to be able to predict the future. Forecasts also require modelers to run many simulations from different starting points and slightly different conditions. Simulating a range of realistic scenarios, processing the output in a way that humans can understand, and gathering an ever-expanding database of observations to begin the next forecast all lead to another problem: disk space. Supercomputers are needed to store all the information required to generate a credible forecast. The forecast output from a single modeling center amounts to more than 10 trillion bytes of data each day. At that rate, it would not take many days for the disk space of our brains to fill up. Maybe I should speak for myself, but people can have enough difficulty remembering the things they want to remember.
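The idea of running many simulations from slightly different starting points can be sketched in a few lines. The example below is only a toy: it swaps in the three-variable Lorenz (1963) system, a classic stand-in for chaotic weather, for a real Earth system model, and steps it forward with a crude forward Euler scheme. The function names, the ensemble size, and the perturbation size are all assumptions chosen for illustration, not anything a modeling center actually uses.

```python
import random
import statistics


def lorenz_step(state, dt=0.01, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Advance the toy Lorenz-63 'weather' by one forward Euler step."""
    x, y, z = state
    dx = sigma * (y - x)
    dy = x * (rho - z) - y
    dz = x * y - beta * z
    return (x + dt * dx, y + dt * dy, z + dt * dz)


def run_member(initial_state, n_steps):
    """Run one simulation (one ensemble member) forward in time."""
    state = initial_state
    for _ in range(n_steps):
        state = lorenz_step(state)
    return state


def run_ensemble(best_guess, n_steps, n_members=20, perturbation=1e-3, seed=0):
    """Run many members, each started from a slightly nudged best guess."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    finals = []
    for _ in range(n_members):
        start = tuple(v + rng.gauss(0.0, perturbation) for v in best_guess)
        finals.append(run_member(start, n_steps))
    return finals


def spread(members):
    """Standard deviation of the first variable across the ensemble."""
    return statistics.pstdev([m[0] for m in members])


if __name__ == "__main__":
    observed_now = (1.0, 1.0, 1.0)  # hypothetical "present-day observation"
    short_range = run_ensemble(observed_now, n_steps=100)
    long_range = run_ensemble(observed_now, n_steps=3000)
    print("short-range spread:", spread(short_range))
    print("long-range spread: ", spread(long_range))
```

In this toy setup the members stay close together at short range and drift far apart at long range, which is one way to see why a forecast a few days out can be trusted more than one ten days out. It also hints at the storage problem: every extra member is another full set of output to keep.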

The complexity of models, the computational power of supercomputers, and the disk space required to store their output have all increased exponentially, and this has had tangible results. Over the past 40 years, a measure of the accuracy of forecasts 3 days ahead of time has increased from about 80% to nearly 99% and that of forecasts 10 days ahead of time has increased from don’t-even-try to nearly 50%. Regional-scale forecasts can be run to estimate how likely a hurricane is to produce dangerous winds, or enough rain or storm surge under changing sea levels to flood coastal regions or contaminate their freshwater supplies with seawater. Information from satellites as well as instruments in the ocean, sticking out of the ocean, and on land is used to calculate these forecasts with supercomputers. While there are still inequitable responses to the hurricanes that cause billions of dollars in damage, hurricanes have been predicted far enough ahead of time using supercomputers that lives were saved.

New models continue to be built for the purpose of predicting ever more aspects of the relationship between humans and Earth, such as the Energy Exascale Earth System Model, which aims to model not only the Earth’s conditions but also human-built energy infrastructure. This particular model has been built ahead of the super-duper-computers that scientists plan to run it on. These next-generation supercomputers will cost hundreds of millions of dollars, which will require continued federal investment to become a reality. The cost of all supercomputers adds up over the years, but without supercomputers, society would need to resort to prediction methods (ironically locked away in a black box) that were utilized by the Farmer’s Almanac centuries ago. Undoubtedly, the disappearance of supercomputers would translate not only into financial loss in a more unpredictable world but, more importantly, into human loss.